The Skills That Are Actually Safe

Everyone is telling you to learn prompt engineering.

That advice is six months out of date and the people giving it know it.

Prompt engineering was a skill in 2023 when you had to carefully construct inputs to coax useful outputs from early models. The models are better now. The skill of crafting prompts is being automated by the same tools that made it valuable. The job category that barely existed two years ago is already contracting.

So let’s talk about what’s actually happening to skills right now. Not what the LinkedIn influencers are saying. What the data shows.

The skills the data says are contracting

Computer programmers sit at the top of Anthropic’s exposure list. Not junior programmers. Not entry-level developers. Programmers generally. The research doesn’t soften this. Writing code, translating a requirement into working syntax, is the task AI has gotten best at, fastest. The job-finding rate for workers aged 22 to 25 entering AI-exposed occupations has fallen 14% since ChatGPT launched. That number is a leading indicator, not a lagging one.

Customer service representatives are second. This one surprises nobody. Klarna’s AI handles the workload of 700 humans. That’s not a forecast. That’s a published number from a company that doesn’t need to impress anyone with it.

Data entry workers are third. Also unsurprising. What is surprising is that data analysis, the step above data entry, is following close behind. Not because AI can do analysis. Because AI has made the line between data entry and data analysis much harder to draw.

The University of Sydney published research this week confirming these three categories are most exposed. The researchers also confirmed something worth sitting with: even within the most exposed occupations, AI use is still limited. The mass displacement hasn’t arrived yet. What has arrived is the leading edge. The job-finding rate declining 14% is what a leading edge looks like in the data.

The skills the data says are appreciating

Judgment under uncertainty. The ability to look at something AI produced and know, not from a checklist but from depth, that it’s wrong. Not wrong in an obvious way. Wrong in the specific way that costs the company $200,000 if it ships.

This skill cannot be built by studying AI. It’s built by years of being in rooms where things went wrong, being held accountable for those failures, and developing the instinct that comes from having skin in the game repeatedly. AI is producing more output than ever. The humans who can evaluate that output accurately are becoming the scarcest resource in tech.

Stakeholder translation. The ability to sit across from someone who is frustrated and vague, understand what they actually need rather than what they said, and turn it into something buildable. AI can assist this conversation. It cannot replace the person who knows that “it feels slow” means “we’re about to churn.” That gap between human ambiguity and technical precision is still human work.

Accountability ownership. The willingness to own a result completely. To be the person whose name is on it when it breaks at 2am. This isn’t a technical skill. It’s a positioning decision. And it’s the one most tech workers are actively avoiding because it’s uncomfortable. The market is about to pay a significant premium for people who lean into it instead.

The contrarian read nobody is offering

Citadel Securities published a rebuttal this week arguing that actual AI adoption is slow, expensive, and nowhere near fast enough to meaningfully displace workers. They pointed to software engineering hiring being up 11% year on year.

They’re not wrong about the current data. They’re wrong about what the current data means.

Hiring up 11% for software engineers while companies simultaneously cut entry-level programmers from their payroll means the pyramid is changing shape. Not shrinking. Reshaping. Senior engineers who can own outcomes are in demand. Junior engineers who execute processes are not. The average is fine. The distribution is brutal.

The people reading Citadel’s “don’t worry” report and feeling reassured are the ones most at risk. Not because displacement is happening to everyone. Because it’s happening selectively. And the selection criteria are exactly the process versus outcome split.

What to actually do with this

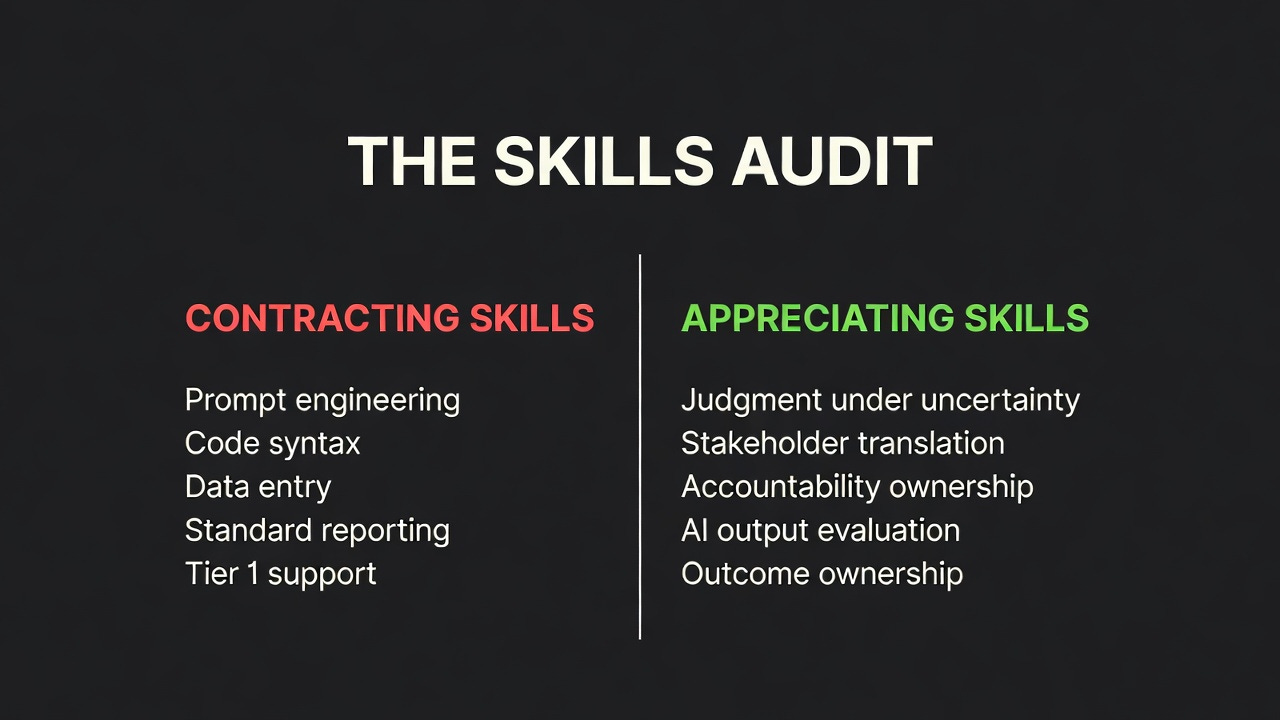

The skills audit is the only honest starting point. Not “what new tool should I learn.” That’s a proxy move. The real question is where you sit on the process to outcome spectrum right now.

Pull your last two weeks of actual work. For each task ask: am I executing a defined process or am I owning an outcome someone cares about when it breaks?

If more than half your tasks are process work, the skill building that matters isn’t prompt engineering. It’s moving toward accountability. Find one thing in your organization that nobody clearly owns when it fails. Own it. Put your name on the result. Do this before your company does the same audit and draws its own conclusions about your role.
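If you want to make the audit concrete, here is a minimal sketch of the tally in Python. The `Task` type, the `owns_outcome` flag, and the sample log are all hypothetical, made up for illustration. The only thing taken from the text above is the "more than half" threshold, and deciding which bucket each task belongs in is still your call.

```python
from dataclasses import dataclass


@dataclass
class Task:
    description: str
    owns_outcome: bool  # True if you answer for the result when it breaks


def process_share(tasks: list[Task]) -> float:
    """Fraction of tasks that are process work rather than owned outcomes."""
    if not tasks:
        raise ValueError("the audit needs at least one task")
    process_count = sum(1 for t in tasks if not t.owns_outcome)
    return process_count / len(tasks)


def mostly_process(tasks: list[Task]) -> bool:
    """Apply the threshold from the text: more than half is process work."""
    return process_share(tasks) > 0.5


# Hypothetical two-week log, for illustration only.
log = [
    Task("Close tickets from the sprint board", owns_outcome=False),
    Task("Run the weekly report export", owns_outcome=False),
    Task("On call for the checkout service", owns_outcome=True),
    Task("Update config per the runbook", owns_outcome=False),
]

print(f"process share: {process_share(log):.0%}")
print("move toward accountability" if mostly_process(log) else "mostly outcome work")
```

The counting is trivial on purpose. The hard part is labeling each task honestly.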

The skills that are actually safe right now aren’t the ones everyone is talking about learning.

They’re the ones that require being wrong in front of people, repeatedly, until you build the instinct that can’t be taught.

The skills AI can’t replace are the ones that hurt to develop.

To this I would add injecting human experience into writing.

For example, AI can't replace marketers.

Good marketing is about shared human experience.

And you can't fake lived experience.

High ownership is what most people struggle with and what AI tools are incapable of handling. That's why it's quite a safe long-term path.